During Oliver Wyman’s recent Data Analytics Capabilities Study (DACS), participants repeatedly observed that analytical results produced in different parts of the same organization often fail to agree. As one Chief Data Scientist commented, “We can end up with analytical outcomes that may as well be apples, bananas, and screwdrivers as they are so hard to compare or reconcile.” This raised the question of why this phenomenon occurs and which organizational arrangements represent best practice for avoiding it.

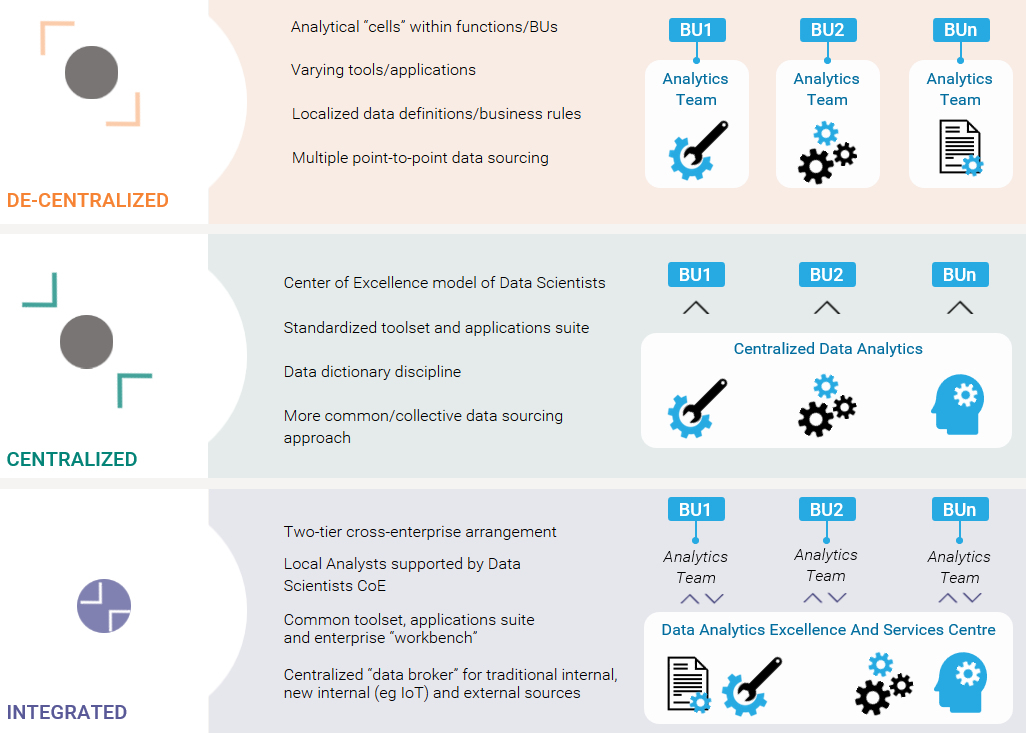

A number of key design principles are shaping the successful implementation of data analytics at leading organizations. Foremost is the use of an integrated operating model. Most firms begin with a de-centralized or centralized model for data analytics, both of which have flaws that impede collective transformation into a data-driven organization. A typical evolution follows three states:

- De-centralized (or “federated”). Fragmented cells or silos of data scientists conduct analysis on their own terms, with their own tools, often sourcing data in varying and duplicative ways.

- Centralized. Highly skilled data scientists represent a form of Center of Excellence (CoE) or Factory, plowing through an analytics demand queue across business and functional areas. These Data Scientists also have to deal with multiple data-management formats from different units.

- Integrated. Data analytics is integrated, with the CoE positioned to enable organization-wide analytics excellence; tackle the trickiest, most complex and often most innovative modelling challenges; and act as a data broker to source and curate data on behalf of the enterprise’s analytical community. In this arrangement, Data Scientists act as enablers to the broader community, creating specialized tools, improving and refactoring models for stronger or more efficient performance and enabling models to have wider applicability and fungibility across the organization. This essentially democratizes analytical capabilities, allowing a much broader group (of ‘non data scientists’) across the organization to benefit from a rich palette or workbench of tools and datasets.

The de-centralized (or federated or siloed) arrangement is arguably a “let a hundred flowers bloom” approach: different types of data collection, different business rules and extract or enrichment logic, different models, and ultimately quite different outcomes. Each organizational cell works well at its own level and in its own specific domain, but such arrangements are very hard to scale. A further, potentially huge downside is that manufacturing, sales, R&D, retail, and other units work in silos with little or no transparency between groups or fungibility of insight. As one of the Oliver Wyman study contributors remarked, “we find it particularly hard to share because analysts have to learn so much about the tools, datasets, software applications, and ‘norms’ of other analysts in other parts of the organization.”

Moving to a centralized model, where one central entity conducts analytics on behalf of the organization, creates its own problems. This lab or factory can become too distant from the business lines or functions it has been set up to serve, missing out on the context, nuances, or special data associated with the particularities of a given organizational area. More notably, the over-concentration of analytical resources can create a backlog or bottleneck that frustrates organizational units deemed lower-priority, or can result in a form of ‘batch processing’ that stifles innovation, insight, and agility.

The integrated model represents a best-of-breed approach. Data Scientists can build analytical assets to reshape models, making them faster and more intuitive—and put them on desktops so everyone (not just the Data Scientists) can ask and answer their own questions through a powerful analytical workbench. This model is arguably the most flexible, productive, and democratic of the three.

Paul Mee, Partner, describes the value of an integrated approach to analytics.

Figure 1: The Convergence to an Integrated Model

Transitioning to an integrated model is not easy, particularly in terms of governance and apparent control, because it requires a new organizational mindset toward data and analytics, one that serves both the common good and individuals. But looking at firms that have succeeded, we observe a number of steps on the path to success.

- Start small and act quickly. Without trying to work across the entire enterprise, form a ‘club’ of smart analysts. Build a group that will share expertise, fungible models, and streams of data. Use that nucleus as a platform for expansion and analytics innovation.

- Envision and prepare for a more robust architecture. If you were to rethink the standard architecture (or distinct parts of it), what tools would you need? What data (sources, formats, and fidelity) would data scientists want, today and potentially tomorrow? Which capabilities would organizational units need and benefit from the most? Avoid retrofitting or carrying forward historical baggage: get creative, lean, and connected.

- Build a case for action. A business case is not always a financial case, but it will articulate the value of a new, improved arrangement that is faster, less wasteful, and more widely valuable to, and valued by, the organization. As an example, an insurer modeled the propensity to file a health-insurance claim, and then applied the same model to accident claims. Similarly, a major telco developed a pricing model for phone charges, then applied an enhanced version of the model to cable TV, then to bundles, then to certain socioeconomic groups, then to value-adding services and affiliates, and so on. As different teams gradually work to use data and models in a consistent way, the integrated model begins to take hold and makes an increasingly large difference to the analytical and decision-making abilities of the enterprise.

- Tackle the fundamentals around data. When one group creates a model that delivers useful uplift, other groups will likely want to leverage it. But data can be a barrier to scale. The integrated model relies on data accessibility, fidelity, and quality, and on providing that data efficiently, for example by avoiding a hundred ‘rope bridges’ where a data highway is needed. Where the integrated CoE can act as a form of Data Broker to source, curate, and enrich data on behalf of a broad analytical community, efficiency and efficacy benefits will be realized.

- Tell a story of success. If what has been done moves the needle, tout it. Develop a narrative around analytics applied to a specific business case (or two) and elevate it to the C-suite. Demonstrating in clear terms how much better things are now than in the past is potentially excellent PR for an enterprise’s data scientist community. As one major bank asserted, “we have learned to analyze, publicize and promote the impact our data analytics capabilities is making.”

“Just being able to get apples-to-apples numbers puts us in a much stronger position to be able to complete the analysis needed and make related decisions with confidence.”

2016 Data Analytics Capabilities Study Participant