Bon Jovi lyrics aside, it’s hard for consumers to find a good doctor. You might expect major healthcare organizations’ measurement teams to have great tools and information to identify the “best” physicians. But this isn’t the case.

Most primary care physicians we’ve talked to admit they refer patients to specialists based on who they know and on general impressions of capability drawn from a specialist’s demeanor and responsiveness, with no information about specialists’ quality, outcomes, or objective performance measures. Similarly, network teams at health insurers use mostly credentialing, brand names, geographic adequacy, and negotiated prices to structure their networks.

For the average healthcare consumer in search of a physician, particularly a specialist, it may seem like physicians and health plans intentionally make their information hard to find. The truth is, physicians, health plans, and other organizations have little ability to evaluate whether one specialist is “better” than the next. The reason? Healthcare lacks a coherent measuring stick against which to assess “good” performance.

The world of “objective” physician measures is increasingly crowded. Everyone claims to have meaningful objective measures. Payers and providers are bombarded with measures – the Centers for Medicare & Medicaid Services Stars (CMS Stars), the Healthcare Effectiveness Data and Information Set (HEDIS), Leapfrog, CAHPS, total cost of care, episodic groupers, bundled costs, risk scoring and adjustment – the list goes on. While each of these is useful for a specific purpose, none tell consumers if a physician delivers good care.

Finding a Good Doctor’s Like...Finding a Good Car

When car shopping, would you find it helpful to know each tire’s average pressure, the number of average gallons in the gas tank last month, or how much windshield washer fluid’s left? These are all objective measures. With them, you could create a combined score for the vehicle and compare those individual measures and combined score to the scores of other vehicles. But these objective measures and scores wouldn't tell you anything about the vehicle’s safety, reliability, and performance relative to other vehicles. Sure, tire pressure is useful in knowing if you need more air, but not so much in deciding whether or not to buy the car.

Similarly, in healthcare a growing number of objective measures are useful – for specific questions. But they have little relevance in evaluating who is “better” at delivering “good” medicine.

Healthcare’s Measures Just Skim the Surface

Take CMS’ Quality Payment Program, which uses 423 measures to assess providers nationally on quality, interoperability, and improvement activities. Too often, CMS measures activities that are either table-stakes processes or peripheral to the central question of care quality.

Did the patient receive a smoking cessation pamphlet? What about a fall-risk assessment? These are important process components, but like a car’s tire pressure, they aren’t primary drivers of quality care. CMS seems to recognize existing measures are imperfect and incomplete – CMS awarded over $26 million last year to develop new, better quality measures. On the provider side, look at any operating dashboard and you’ll probably see that most metrics – like capacity, throughput, cost per RVU, occupancy rates, and ratios of physicians to advanced practice clinicians – are focused on financial and operating performance, and don’t assess whether physicians are providing good patient care.

Here’s a Framework for Defining “Good” Medicine

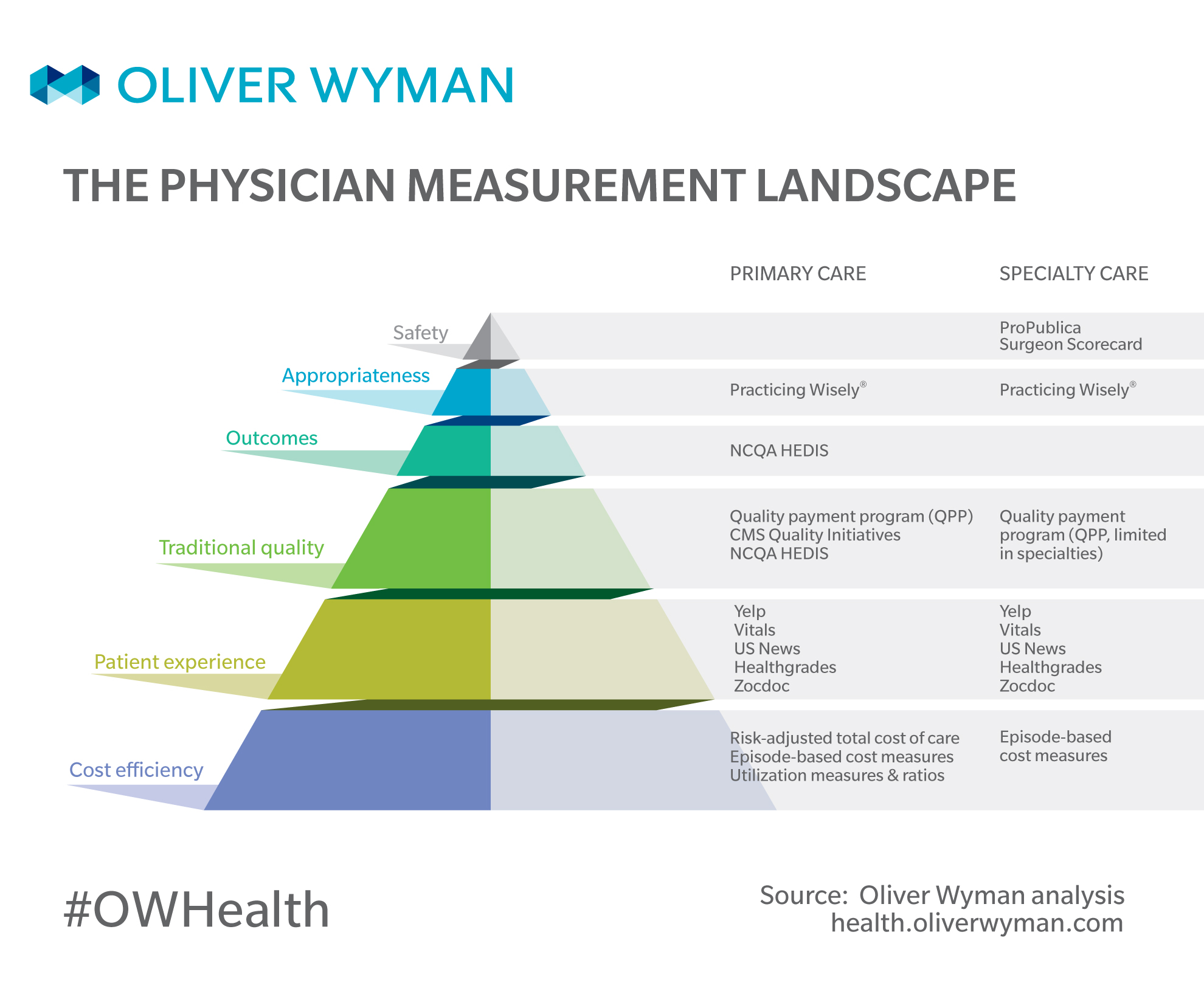

The Healthcare Measurement Hierarchy

1. Safety: First, do no harm. Does the physician expose patients to predictable and preventable harm, when there is minimal benefit? For example, hospital-acquired conditions, such as surgical site infections.

2. Appropriateness: Only do what's necessary. Does the physician deliver care consistent with evidence-based guidelines? When measuring appropriateness, it’s important to recognize that care is contextual. For example, performing back surgery on someone who has failed prior conservative treatment such as physical therapy may be appropriate, whereas performing back surgery on a patient presenting with a first episode of back pain is not. Thus, generalized measures that simply tally a physician’s use of potentially “low-value” procedures like back surgery cannot discriminate between appropriate and inappropriate use. Of course, back surgeons are trained, well, to perform back surgery. Appropriateness measures therefore need to evaluate physician treatment decisions and performance in the context of the patient’s care journey. For example, subacromial decompression for shoulder pain is a surgery demonstrated to be no better than placebo under some circumstances. However, it's sometimes a necessary, valuable procedure when performed as part of a surgical shoulder repair located behind the acromion.

3. Outcomes: Are the surgeons’ operations successful? Do physicians’ patients live long, happy lives? How do you ensure a physician isn’t just operating on all low-risk patients who would have had good outcomes without the procedure? Not exactly easy to measure reliably. For example, risk-adjusted surgical complication rates.

4. Traditional Quality: Does the physician adhere to evidence-based care pathways and processes? For example, the percentage of women who had a cervical cancer screening with a Pap test, or the percentage of diabetics with well-managed HbA1c levels.

5. Patient Experience: Is the physician responsive to a patient’s preferences, values, and time? For example, patient reviews and surveys.

6. Cost Efficiency: Is the physician a good steward of the system’s resources? For example, risk-adjusted total cost of care, total cost per total knee replacement, or inpatient days per 1,000.

“Outcomes are what matter most – if the patient is better, what else matters?” is a common question echoing across the industry. The problem is, outcomes measures are almost exclusively based on a particular intervention. For example, take a surgeon’s knee replacement complication rates. These metrics commonly favor lower risk patients, but more importantly, they fail to ask: Was the surgery necessary in the first place? Perhaps those low-risk patients driving that surgeon’s excellent score might have had similar outcomes without the surgery and benefitted without the inherent risk, recovery, and substantial expense of the surgery. By adding the dimension of appropriateness (Was the surgery necessary?) to outcomes (Was the surgery effective?) a more complete picture emerges.

Although there's a need for better holistic measures, the category into which the industry has historically had the least visibility is Appropriateness. Thankfully, this is an area with increasing attention as industry players work to measure “waste” and “low-value” care indicators, and some even evaluate physician treatment decisions against evidence-based guidelines.

A central challenge facing those tackling the appropriateness space? Only a handful of healthcare actions are “always” or “never” the right thing to do. Most of healthcare is contextual, requiring physician judgement.

For example, a magnetic resonance imaging (MRI) scan can be appropriate for assessing a patient’s back pain when potential red flags for cancer are present. But it is not recommended for patients with an initial diagnosis of uncomplicated back pain – studies have shown that imaging uncomplicated back-pain cases leads to many false positives, unnecessary intervention, and unnecessary expense. Thus, when assessing the clinical appropriateness of care, generalized “low-value” care ratios (like “MRIs per 1,000 patients”) fail to discriminate between clinically appropriate care and unnecessary care.

While these “low-value” care measures are helpful to highlight general watch-out areas, they fall short of evaluating the clinical context to truly assess whether care was appropriate or wasteful. For instance, a low-value care indicator may treat all MRIs of a particular type as “low-value” and potentially wasteful, rather than considering whether the MRI was appropriate at that particular patient's point along his or her care journey.
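
The difference between a volume ratio and a context-aware measure can be illustrated with a small sketch. The record fields (`red_flags`, `first_episode`) and the rule inside `is_appropriate` are assumptions made up for illustration. They are not a clinical guideline and not any measurement vendor's actual logic.

```python
from dataclasses import dataclass


@dataclass
class MriOrder:
    physician: str
    red_flags: bool      # hypothetical field: warning signs (e.g., possible cancer) present
    first_episode: bool  # hypothetical field: initial presentation of back pain


def mris_per_1000(orders, panel_size):
    """Generalized "low-value" ratio: counts scans, ignores clinical context."""
    return 1000 * len(orders) / panel_size


def is_appropriate(order):
    """Illustrative rule only: a scan on a first episode with no red flags is flagged."""
    return order.red_flags or not order.first_episode


def appropriate_share(orders):
    """Context-aware view: share of scans that pass the illustrative rule."""
    return sum(is_appropriate(o) for o in orders) / len(orders)


# Two hypothetical physicians, each with 4 back-pain MRIs across 200 patients.
dr_a = [MriOrder("A", red_flags=True, first_episode=True) for _ in range(4)]
dr_b = [MriOrder("B", red_flags=False, first_episode=True) for _ in range(3)]
dr_b.append(MriOrder("B", red_flags=True, first_episode=False))

print(mris_per_1000(dr_a, 200), mris_per_1000(dr_b, 200))  # identical ratios
print(appropriate_share(dr_a), appropriate_share(dr_b))    # different shares
```

Both hypothetical physicians look the same on the per-1,000 ratio, yet only one of them is ordering scans in a context the illustrative rule accepts.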

So, What Defines a “Good” Measure, Anyway?

Even when you’re measuring the right thing, several challenges hinder the ability to build good measures.

Individual vs. Group: A lot of measures assess physician groups, not individual physicians. That’s convenient for interacting with legal entities – but not helpful for patients, or to promote transparency, self-awareness, and – most importantly – accountability and improvement for physicians. Most physicians are employed by large health systems. Measuring the average would wash out the performance of an outlier physician – whether good or bad – with tens, hundreds, or even thousands of other doctors. As different as the local cultures and demographics of our country are, it remains that for nearly any measure we choose, we see more variability among the individuals in a large practice than we see between the averages of large practices or different geographies.
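
A toy example, using made-up adherence rates, shows how a group average can hide an outlier. The numbers and function names below are assumptions chosen only to make the arithmetic visible.

```python
from statistics import mean

# Hypothetical guideline-adherence rates for individual physicians in two groups.
group_x = [0.90, 0.88, 0.92, 0.40]  # three strong performers, one outlier
group_y = [0.78, 0.77, 0.76, 0.79]  # uniformly middling


def group_average(rates):
    """What a group-level measure reports."""
    return mean(rates)


def within_group_spread(rates):
    """Gap between the best and worst individual in the group."""
    return max(rates) - min(rates)


for name, rates in (("group_x", group_x), ("group_y", group_y)):
    print(name, round(group_average(rates), 3), round(within_group_spread(rates), 2))
```

The two groups report essentially the same average, while the spread inside `group_x` is far larger than the difference between the groups.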

Sample size and continuum of care data: A good measure looks beyond office visit data to know what happened before the visit and after the visit to accurately evaluate a physician’s decisions and those decisions’ impact on the patient. Consider, for instance, a multi-payer database with longitudinal patient data. This is why we’re seeing a huge advantage for companies that combine data from CMS’ 100 percent Medicare database and commercial payers, to create the largest window possible into each physician’s practice, providing visibility into the full continuum of care with the sample size needed for reliable assessment.

Tiering / classification: Large studies have shown flipping a coin is a better predictor of performance than most available measurement approaches are, due to the likelihood of misclassifying high and low performers through cost profiling programs.

In contrast, emerging approaches, like those developed by Practicing Wisely (an Oliver Wyman sponsored initiative to evaluate physicians on whether their care is supported by evidence-based guidelines) focus on measures that are specialist specific and evaluate specific clinical decisions. Thus, the general surgeon doesn’t qualify for the same measures as the colorectal surgeon, and vice versa. Further, the comparison base for a measure is the group of physicians that qualified for that measure because only that cohort faced the clinical decision in question, naturally causing the performance evaluation of the colorectal surgeon to be judged relative to other colorectal surgeons. This approach puts less pressure on inaccurate physician specialty taxonomies sitting in physician registries.

In the end, we suspect no taxonomy will be fine-grained enough to convey the range of different types of practices, sub-specialties, and care settings out there. Thus, the need to evaluate specific decisions relative to others facing that same decision.
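
One way to picture a cohort defined by the decision rather than by a specialty label is sketched below. This is not Practicing Wisely's method. The physician names, measure names, and the `cohort_scores` helper are hypothetical.

```python
from collections import defaultdict
from statistics import median

# (physician, measure, followed_guideline): all values are made up for illustration.
decisions = [
    ("colorectal_1", "measure_c", True),
    ("colorectal_1", "measure_c", True),
    ("colorectal_2", "measure_c", True),
    ("colorectal_2", "measure_c", False),
    ("general_1", "measure_g", True),
    ("general_1", "measure_g", False),
]


def cohort_scores(decisions, measure):
    """Score only physicians who actually faced the decision behind `measure`."""
    outcomes = defaultdict(list)
    for physician, m, followed in decisions:
        if m == measure:
            outcomes[physician].append(followed)
    return {p: sum(v) / len(v) for p, v in outcomes.items()}


scores = cohort_scores(decisions, "measure_c")
cohort_median = median(scores.values())
print(scores, cohort_median)
```

Because `general_1` never faced the `measure_c` decision, that physician never enters the comparison base, whatever specialty code a registry assigns.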

Attribution logic: Attributing responsibility for utilization of MRI scanning to the radiologist responsible for reading the scan is misguided, leading to spurious results. Similarly, approaches that assign measure attribution to several providers for a single care decision just distribute a score across a broad set of physicians, independent of their role in the care decision. Developing thoughtful attribution logic, specific to each measure, that identifies the physician responsible for the care decision is necessary to accurately assess performance, drive acceptance of performance measures, and create meaningful provider engagement with the results.
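
The MRI example can be sketched with a few made-up claim records. The field names (`ordering`, `reading`) are assumptions, since real claims data may label these roles differently.

```python
from collections import Counter

# Hypothetical claim records; field names are assumptions for illustration.
claims = [
    {"service": "MRI", "ordering": "orthopedist_1", "reading": "radiologist_1"},
    {"service": "MRI", "ordering": "orthopedist_1", "reading": "radiologist_1"},
    {"service": "MRI", "ordering": "pcp_1", "reading": "radiologist_1"},
]


def attribute(claims, role):
    """Count scans per physician under a given attribution role."""
    return Counter(c[role] for c in claims)


by_reader = attribute(claims, "reading")    # credits the radiologist with every scan
by_orderer = attribute(claims, "ordering")  # credits whoever made the care decision
print(by_reader, by_orderer)
```

Attributing by the reading role makes the radiologist look like a heavy MRI user, while attributing by the ordering role points to the physicians who made the decision.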

Benchmarking: Many benchmarking measurement systems just compare heterogeneous peer groups. For example, they may mix oncologists with cardiologists for transthoracic echocardiograms, or orthopedists with primary care physicians for MRIs, who might be performing the same procedure but under very different circumstances. Measures also tend to use peer distributions to set benchmarks, trying to steer everyone to get to “above average.” There are measures where most of the country is doing the right thing, and others – like opioid prescribing – where there is still rampant overuse across most of the system. Using a mix of peer benchmarks as well as evidence-based guidelines to evaluate “good” practice is important to address areas where there is a need to move systemic patterns of misuse.
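
A minimal sketch of checking one physician against both a peer benchmark and a guideline-based target follows. The rates and the `guideline_max` threshold are invented for illustration and do not come from any real guideline.

```python
from statistics import median


def evaluate(rate, peer_rates, guideline_max):
    """Lower is better: `rate` is the share of a physician's care outside guidelines."""
    return {
        "better_than_peer_median": rate < median(peer_rates),
        "meets_guideline": rate <= guideline_max,
    }


# Hypothetical peer group where overuse is systemic, so the median itself is high.
peer_rates = [0.40, 0.45, 0.50, 0.55, 0.60]
result = evaluate(0.42, peer_rates, guideline_max=0.10)
print(result)
```

In this made-up case the physician beats the peer median yet still falls well short of the guideline target, which a purely peer-relative benchmark would not reveal.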

What Impacts Total Cost Trend?

Quality aside, payers, patients, and even providers are increasingly trying to use measures to solve the cost conundrum. How can we lower the cost of care without sacrificing quality? Until recently, the only variable predictive of total cost of care was historical risk-adjusted total cost of care. That’s been acceptable for primary care physicians, but it’s been insufficient for measuring specialist efficiency. Two examples of where traditional cost-rooted measurement and incentive programs have been well intentioned but have led to unintended consequences include:

1. Incentives. When payers use episode groupers to incent physician efficiency via bundled payments, total cost of care can actually increase. While bundles effectively incent efficient care within the episode, specialists in these payment models tend to do more procedures on lower risk patients to trigger the episode and qualify for payment.

2. Unit price. The more CMS and payers squeeze margins on the prices of surgery, the more unnecessary care is said to grow – providers will use volume to replace margin. For instance, surgeons emphasize off the record that the best way to reduce unnecessary care is to stop putting pressure on unit prices and to focus on ways to link payment to better care.

Oliver Wyman’s Key Appropriateness Finding

Measures of appropriateness are solving this conundrum. Practicing Wisely has some compelling findings. For instance, Practicing Wisely’s appropriateness scores have been actuarially validated to identify providers who are not only delivering more evidence-based care but are also predictive of total cost of care, above and beyond the predictive power of risk-adjusted historical total cost of care. Perhaps unsurprisingly, specialists who deliver care that’s better-aligned with evidence-based guidelines also deliver care that’s lower cost overall.

Good measures make it possible to do the right thing for patients – proving that doing the right thing improves quality and reduces the cost to our healthcare system. As the industry becomes more enlightened on the need to create “good” measures that evaluate better care for patients, we move a step closer to the goal of “good medicine.”